pvigier's blog

computer science, programming and other ideas

Simulopolis Alpha 3 Is Released

Today, I am releasing the third alpha version of Simulopolis.

In this post, I will describe what’s new since the initial release.

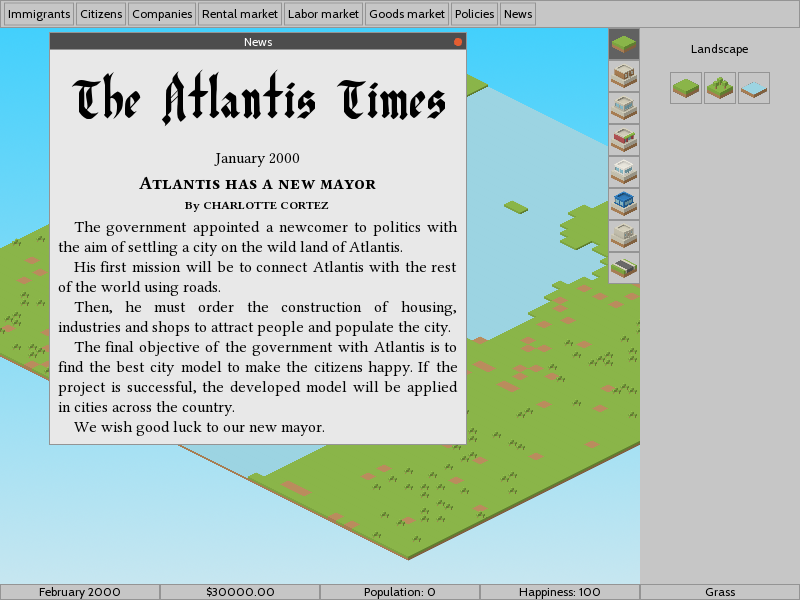

In this screenshot, you can see some minor changes to the interface and the main new feature: the newspaper.

Tags: simulopolis

3D Perlin Noise With Numpy

A few weeks ago, a professor from the University of Waterloo contacted me to ask whether it was possible to adapt my 2D Perlin noise code to 3D Perlin noise.

I was very happy that someone was interested in this piece of code. After some thought, I answered that it was possible and decided to do it.

I moved the old code that generates 2D Perlin noise to the file perlin2d.py and placed the code described below for 3D Perlin noise in the file perlin3d.py. You can find all the code in this GitHub repo.
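As a rough illustration of what moving from 2D to 3D involves, here is a minimal NumPy sketch of 3D Perlin noise. It is not the code in perlin3d.py: the names `perlin_noise_3d`, `fade` and `lerp` and the `seed` parameter are hypothetical choices of mine, and the random unit gradients are obtained by normalizing Gaussian samples. The idea is the same as in 2D, except that each sample now interpolates the contributions of the 8 corners of its lattice cell instead of 4.

```python
import numpy as np


def fade(t):
    # Perlin's quintic interpolant: 6t^5 - 15t^4 + 10t^3
    return 6 * t**5 - 15 * t**4 + 10 * t**3


def lerp(a, b, t):
    # Linear interpolation between a and b with weight t
    return a + t * (b - a)


def perlin_noise_3d(shape, res, seed=0):
    """Return a 3D array of Perlin noise (hypothetical helper, not perlin3d.py).

    shape: number of samples along each axis, e.g. (64, 64, 64)
    res: number of lattice cells (noise periods) along each axis, e.g. (4, 4, 4)
    """
    rng = np.random.default_rng(seed)
    # Continuous lattice coordinates of every sample, in [0, res[i])
    axes = [np.arange(n) * r / n for n, r in zip(shape, res)]
    x, y, z = np.meshgrid(*axes, indexing="ij")
    # Integer cell index and position inside the cell, in [0, 1)
    xi, yi, zi = x.astype(int), y.astype(int), z.astype(int)
    xf, yf, zf = x - xi, y - yi, z - zi

    # One random unit gradient vector per lattice corner
    gradients = rng.normal(size=(res[0] + 1, res[1] + 1, res[2] + 1, 3))
    gradients /= np.linalg.norm(gradients, axis=-1, keepdims=True)

    def corner(dx, dy, dz):
        # Dot product between the corner's gradient and the offset from that corner
        g = gradients[xi + dx, yi + dy, zi + dz]
        return g[..., 0] * (xf - dx) + g[..., 1] * (yf - dy) + g[..., 2] * (zf - dz)

    u, v, w = fade(xf), fade(yf), fade(zf)
    # Trilinear interpolation of the 8 corner contributions with faded weights
    x00 = lerp(corner(0, 0, 0), corner(1, 0, 0), u)
    x10 = lerp(corner(0, 1, 0), corner(1, 1, 0), u)
    x01 = lerp(corner(0, 0, 1), corner(1, 0, 1), u)
    x11 = lerp(corner(0, 1, 1), corner(1, 1, 1), u)
    y0 = lerp(x00, x10, v)
    y1 = lerp(x01, x11, v)
    return lerp(y0, y1, w)
```

For example, `noise = perlin_noise_3d((64, 64, 64), (4, 4, 4))` returns a 64×64×64 array; each slice `noise[:, :, k]` is a 2D noise image, and moving along the third axis changes it smoothly, which is one reason 3D noise is often used to animate 2D noise.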

Terrain Generation in Simulopolis

Hi!

Today, I will describe the terrain generator I made for Simulopolis.

Here are some screenshots of terrains from the game.

In this article, I will not describe the C++ code used in the game, because it is verbose and unnecessarily complicated. Instead, I will use the Python code I wrote to design the generator.

Indeed, I find it much more pleasant to prototype in Python: it is really fast to write something that works, there are good libraries, and there is no need to wait for compilation every time we make a change. This is especially true for procedural content generation, where we have to iterate a lot before obtaining something decent.

The code I will present is an exact Python translation of what is currently implemented in the game. It is based on the implementation of Perlin noise I described in a previous article. As usual, you can find the whole project on GitHub.
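To give an idea of how a terrain can be built on top of Perlin noise, here is a minimal sketch of a common approach: sum several octaves of 2D Perlin noise into a heightmap, then threshold the height into tile types. This is an assumption for illustration, not necessarily what Simulopolis does: the tile types (`WATER`, `SAND`, `GRASS`, `FOREST`), the thresholds, the octave count and the function names are all hypothetical.

```python
import numpy as np

# Hypothetical tile types, for illustration only
WATER, SAND, GRASS, FOREST = 0, 1, 2, 3


def fade(t):
    # Perlin's quintic interpolant: 6t^5 - 15t^4 + 10t^3
    return 6 * t**5 - 15 * t**4 + 10 * t**3


def perlin_2d(shape, res, rng):
    # One octave of 2D Perlin noise with res lattice cells per axis
    xs = np.arange(shape[0]) * res[0] / shape[0]
    ys = np.arange(shape[1]) * res[1] / shape[1]
    x, y = np.meshgrid(xs, ys, indexing="ij")
    xi, yi = x.astype(int), y.astype(int)
    xf, yf = x - xi, y - yi
    # Random unit gradients at the lattice corners
    angles = rng.uniform(0, 2 * np.pi, size=(res[0] + 1, res[1] + 1))
    gx, gy = np.cos(angles), np.sin(angles)

    def corner(dx, dy):
        # Dot product between the corner's gradient and the offset from that corner
        return gx[xi + dx, yi + dy] * (xf - dx) + gy[xi + dx, yi + dy] * (yf - dy)

    u, v = fade(xf), fade(yf)
    bottom = corner(0, 0) + u * (corner(1, 0) - corner(0, 0))
    top = corner(0, 1) + u * (corner(1, 1) - corner(0, 1))
    return bottom + v * (top - bottom)


def fractal_noise_2d(shape, res, octaves=4, persistence=0.5, seed=0):
    # Sum octaves: each one doubles the frequency and scales the amplitude
    rng = np.random.default_rng(seed)
    noise = np.zeros(shape)
    amplitude, frequency = 1.0, 1
    for _ in range(octaves):
        noise += amplitude * perlin_2d(shape, (frequency * res[0], frequency * res[1]), rng)
        amplitude *= persistence
        frequency *= 2
    return noise


def generate_terrain(shape, res, seed=0):
    height = fractal_noise_2d(shape, res, seed=seed)
    # Normalize the heightmap to [0, 1]
    height = (height - height.min()) / (height.max() - height.min())
    # Hypothetical thresholds: <0.35 water, <0.4 sand, <0.7 grass, else forest
    return np.digitize(height, [0.35, 0.4, 0.7]).astype(np.int8)
```

Calling `generate_terrain((64, 64), (2, 2), seed=3)` gives a 64×64 grid of tile types. Thresholding a normalized heightmap keeps the tuning loop short: changing a threshold or the persistence and regenerating is exactly the kind of fast iteration that makes Python convenient for prototyping.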

Tags: pcg simulopolis

Distributing a C++ Program on Linux

Hi!

Today, I will tackle a problem I faced two weeks ago when I wanted to distribute the alpha version of Simulopolis: distributing a C++ binary across different Linux distributions.

So, two weeks ago, I was happy because I had a version of Simulopolis that ran smoothly on my laptop, and I thought it was time to share it with people to get some feedback. Very naively, I took the binary and the assets, put them in a new folder, and uploaded the whole thing to itch.io.

Then, I sent a message to my big brother telling him that he could try my game. He downloaded it, and when he tried to run it, he got this error message:

./Simulopolis: error while loading shared libraries: libboost_serialization.so.1.65.1: cannot open shared object file: No such file or directory

Uhh, this was not expected!

The error message tells us that a dependency I used to build the game is missing from my brother’s system. So he tried to install Boost Serialization, SFML and TinyXML, the three libraries I use in Simulopolis. But that did not solve the problem, because the program looks for a specific version of the dependency (here, version 1.65.1 of Boost Serialization). And as he was on a different Linux distribution than me (Fedora rather than Ubuntu), his package manager did not provide the same version of Boost Serialization.
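One way to see in advance which shared libraries a binary expects, and which of them are missing on a given machine, is to run `ldd` on it: missing libraries show up as `name => not found`. Here is a minimal Python sketch that wraps `ldd` and extracts those entries. The function names are hypothetical helpers; this only diagnoses the problem and does not fix it. Note that `ldd` may execute the program it inspects, so only run it on binaries you trust.

```python
import subprocess


def parse_missing_libraries(ldd_output):
    """Return the shared libraries that ldd reports as 'not found'."""
    missing = []
    for line in ldd_output.splitlines():
        # ldd prints lines such as "\tlibfoo.so.1 => not found"
        name, sep, target = line.strip().partition(" => ")
        if sep and target.strip() == "not found":
            missing.append(name)
    return missing


def missing_libraries(binary_path):
    # Run ldd on the binary (Linux only, ldd must be installed)
    result = subprocess.run(["ldd", binary_path], capture_output=True, text=True, check=False)
    return parse_missing_libraries(result.stdout)
```

On my brother’s machine, `missing_libraries("./Simulopolis")` would presumably have listed `libboost_serialization.so.1.65.1`, the exact file name the loader was looking for.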

Tags: cpp linux simulopolis

Simulopolis Alpha Is Released

Today, I’m happy to announce that the first release of my city-building game, Simulopolis, is available online.

It has been a long and chaotic journey that lasted a bit more than six months.

Tags: simulopolis